AI for Coding: The Natural Next Step We've Been Building Towards All Along

By Stephen Kearney

In 1843, Ada Lovelace wrote what’s widely considered the first computer program - an algorithm for Charles Babbage’s Analytical Engine to compute Bernoulli numbers. She wrote it by hand, in meticulous notation, for a machine that was never actually built.

In 2025, I described a business application in plain English and an AI wrote the code, tested it, and deployed it to a production environment while I watched.

These two moments are separated by 182 years, but they’re connected by a single thread: every generation of programmers has built tools that let the next generation work at a higher level of abstraction. AI-assisted coding isn’t a revolution. It’s the next step in an evolution that’s been underway since the beginning.

The Pattern We Keep Repeating

The history of programming is the history of abstraction - of creating layers that let people focus on what they want to accomplish rather than the mechanical details of how.

Machine code and assembly (1940s-50s). The first programmers worked directly with the hardware. Flip switches. Punch cards. Instructions written in binary or, eventually, assembly language - cryptic mnemonics that mapped directly to what the processor could do. To add two numbers, you told the processor exactly which registers to use and which operation to perform.

High-level languages (1950s-60s). Fortran, COBOL, and LISP let programmers express ideas closer to human thinking. Instead of managing registers, you could write something resembling a mathematical formula or a business rule. “COMPUTE TOTAL-PAY = HOURS-WORKED * PAY-RATE” was suddenly possible. A compiler translated that into the machine code the processor needed.

At the time, plenty of assembly programmers thought this was cheating. That real programmers needed to understand the hardware. That abstracting away the details would produce inferior results. Sound familiar?

Structured and object-oriented programming (1970s-90s). Languages like C, then C++, Java, and Python introduced new ways to organise code that matched how humans think about problems - objects, classes, modules. The abstractions got richer, and the number of layers between what the programmer wrote and what the machine executed grew.

Frameworks and libraries (2000s-present). Modern developers rarely write everything from scratch. They compose applications from pre-built components. A web application in 2024 might use React for the interface, Express for the server, PostgreSQL for the database, and dozens of libraries for everything from authentication to payment processing. The developer’s job shifted from “write all the code” to “orchestrate the right components.”

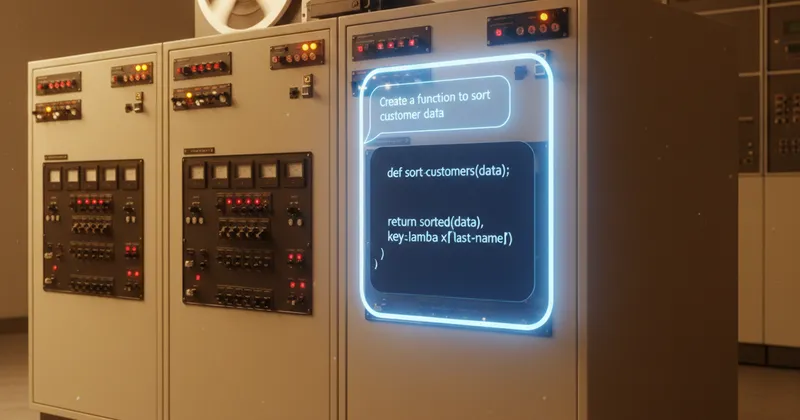

AI-assisted development (now). And here we are. The next layer of abstraction. Instead of manually selecting and composing libraries, you describe what you want and an AI generates the code. You review it, refine it, and guide it - but the mechanical act of translating intent into syntax is increasingly handled by the machine.

The pattern is consistent across every era: each new layer lets developers work at a higher level of abstraction, each layer is initially resisted by practitioners of the previous approach, and each layer ultimately makes software development more accessible and more productive.

What AI Coding Actually Looks Like in Practice

Let me ground this in what I experience daily, because the discourse around AI coding tends toward extremes - either “AI writes perfect code” or “AI code is garbage.”

The reality is more nuanced and more interesting.

Scaffolding and boilerplate. AI is exceptionally good at generating the repetitive structural code that every project needs. Setting up a new API endpoint, creating a database model, writing the configuration files for a build tool - tasks that are necessary but not creative. This is where AI saves the most time with the least risk.

First drafts of business logic. When I describe a workflow - “when a maintenance request is submitted, check the priority, assign it to the on-call technician, send a notification, and create a follow-up task if it’s not resolved within 24 hours” - AI produces a reasonable first implementation. It’s rarely perfect, but it’s a solid starting point that’s much faster to refine than building from scratch.
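To make that concrete, here is roughly the shape such a first draft might take. It is a hand-written illustration, not actual AI output, and every name in it (`MaintenanceRequest`, `submit`, `needs_follow_up`, the priority labels) is a hypothetical I made up for the example. Notice how many decisions the one-sentence description leaves open: what “check the priority” should actually do, and whether “within 24 hours” means exactly 24 hours or more than 24.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional

# Assumption: the description only says "24 hours"; the exact boundary is a guess.
FOLLOW_UP_AFTER = timedelta(hours=24)
# Assumption: the description never lists the priority levels.
KNOWN_PRIORITIES = {"low", "normal", "urgent"}


@dataclass
class MaintenanceRequest:
    description: str
    priority: str
    submitted_at: datetime
    assigned_to: Optional[str] = None
    resolved: bool = False
    notifications: List[str] = field(default_factory=list)


def submit(request: MaintenanceRequest, on_call_technician: str) -> MaintenanceRequest:
    """Check the priority, assign the on-call technician, and record a notification."""
    # "Check the priority" is interpreted here as simple validation only.
    if request.priority not in KNOWN_PRIORITIES:
        raise ValueError(f"unknown priority: {request.priority!r}")
    request.assigned_to = on_call_technician
    # A real system would send an email or push message; this sketch just records it.
    request.notifications.append(
        f"[{request.priority}] {on_call_technician}: {request.description}"
    )
    return request


def needs_follow_up(request: MaintenanceRequest, now: datetime) -> bool:
    """True if the request is still unresolved once the follow-up window has passed."""
    return (not request.resolved) and (now - request.submitted_at >= FOLLOW_UP_AFTER)
```

Most of the refining happens in those comments marked as assumptions. The code runs, but whether it does what the business needs is a separate question.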

Debugging and explanation. “Why is this function returning null when the input is valid?” AI excels at pattern-matching against common errors and explaining what’s going wrong. It’s like having a colleague who’s read every Stack Overflow answer - not always right, but usually pointing in the right direction.

Documentation and tests. Tests and documentation are the tasks developers notoriously skip when pressed for time. AI lowers the barrier enough that these actually get done. Generate the test suite, review it for completeness, add edge cases the AI missed. The result is better-tested code than most projects would otherwise have.

The Reality Check: More Code Isn’t Necessarily Better Code

Here’s where I get honest about the limitations, because they matter.

AI generates code fast. Incredibly fast. And that speed is seductive. You can produce in an afternoon what used to take a week. But volume and quality are different things.

AI code tends toward the obvious. It generates solutions that match common patterns from its training data. For standard problems, that’s fine - the common pattern is common because it works. For unusual requirements, edge cases, or performance-critical systems, the obvious solution may not be the right one.

Compounding errors are real. When AI generates a function that’s right 90% of the time, and that function calls another function that’s right 90% of the time, the overall reliability compounds downward: if the two fail independently, the pair is right only about 81% of the time. In a complex system with dozens of AI-generated components, that compounding effect means bugs hide in the interactions between components, not just within them.
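The arithmetic behind that compounding can be sketched in a few lines. This is a back-of-the-envelope model, not a measurement: it assumes every component is right with the same probability and that failures are independent, which real systems rarely guarantee. `chain_reliability` is a hypothetical helper name.

```python
def chain_reliability(per_component: float, components: int) -> float:
    """Probability that every component in a chain behaves correctly.

    Assumes independent failures and an identical success rate per component.
    """
    if not 0.0 <= per_component <= 1.0:
        raise ValueError("per_component must be between 0 and 1")
    if components < 0:
        raise ValueError("components must not be negative")
    return per_component ** components


# Print how quickly overall reliability falls as the chain gets longer.
for n in (1, 2, 5, 10, 24):
    print(f"{n:>2} components at 90% each -> {chain_reliability(0.9, n):.0%} overall")
```

Two components at 90% each come out at 81%, and ten come out at roughly a third. The exact numbers matter less than the direction: reliability that looks acceptable per function erodes quickly across a system.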

Code review becomes more important, not less. In a traditional development workflow, the person writing the code understands it deeply because they reasoned through every line. With AI-generated code, the reviewer needs to develop that understanding after the fact. This requires a different skill set - reading and evaluating code critically rather than writing it from first principles.

The Ambiguity Problem

The deepest challenge with AI-assisted coding isn’t technical - it’s linguistic. Programming has always been about translating human intent into machine-executable instructions. The gap between what we mean and what we say has always been the primary source of bugs.

AI doesn’t close that gap. It shifts it.

When you write code manually, the ambiguity in your thinking gets resolved as you work through the implementation details. “Handle the error” becomes “catch the specific exception, log it with context, return a meaningful message to the user, and retry the operation if it’s transient.” The act of writing code forces you to be precise.

When you describe what you want to an AI, the ambiguity in your description gets resolved by the AI’s assumptions. And those assumptions might not match your intent. “Handle the error” might produce code that silently swallows exceptions. Or retries infinitely. Or logs a message nobody will ever see.
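The gap between those two readings of “handle the error” is easier to see side by side. The sketch below is illustrative only: `TransientError`, `handle_error_vague`, `handle_error_precise`, and the retry defaults are hypothetical names and values, not taken from any particular library, and the right policy depends on the system.

```python
import logging
import time

logger = logging.getLogger(__name__)


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying, such as a timeout."""


def handle_error_vague(operation):
    """One plausible reading of 'handle the error': swallow everything."""
    try:
        return operation()
    except Exception:
        return None  # the failure disappears; the caller cannot tell what happened


def handle_error_precise(operation, max_attempts=3, delay_seconds=0.0):
    """The precise reading: catch only the specific exception, log it with
    context, retry a bounded number of times, then fail with a clear message."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError as exc:
            logger.warning("attempt %d of %d failed: %s", attempt, max_attempts, exc)
            if attempt == max_attempts:
                # Surface a meaningful message instead of retrying forever.
                raise RuntimeError(
                    f"operation failed after {max_attempts} attempts"
                ) from exc
            time.sleep(delay_seconds)


def make_flaky(failures):
    """Build a demo operation that fails `failures` times, then returns 'ok'."""
    state = {"remaining": failures}

    def operation():
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise TransientError("temporary outage")
        return "ok"

    return operation
```

Calling `handle_error_precise(make_flaky(2))` retries twice and then returns the result, while `handle_error_vague(make_flaky(1))` quietly returns `None`. Both functions “handle the error”. Only one of them does what I meant.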

The skill of working with AI coding tools is largely the skill of being precise about what you want - and recognising when the AI’s assumptions don’t match your intent. That’s a different skill from writing code, but it’s not necessarily an easier one.

The Democratisation Question

One of the most significant implications of AI-assisted coding is who gets to build software.

Historically, building a custom application required either learning to code (a significant time investment) or hiring someone who could (a significant financial investment). Low-code platforms like Power Apps reduced the second barrier considerably. AI coding tools are reducing the first.

A business analyst who understands the problem domain but can’t write Python can now describe what they need and get working code. A small business owner who can’t afford a development team can build a basic tool using AI assistance. A subject matter expert can prototype their ideas without waiting for a developer’s availability.

This is genuinely democratising. And, like every previous wave of democratisation in computing, it makes some practitioners uncomfortable. The assembly programmers didn’t love compilers. The custom-code purists didn’t love frameworks. And some professional developers don’t love the idea that their specialised skill is becoming more accessible.

But the historical pattern is clear: when the barrier to creating software drops, more software gets created, and the demand for skilled practitioners increases rather than decreases. There’s more software in the world than ever, and more developers employed than ever. Accessibility and expertise coexist.

What Comes Next

If the pattern holds - and I see no reason it won’t - the abstraction layer will continue rising. We’re moving from “describe the code you want” toward “describe the outcome you want.” The gap between business intent and working software will narrow further.

That doesn’t mean programming disappears. It means programming changes, as it always has. The skills that matter shift upward: system design, architecture, understanding trade-offs, evaluating quality, and - perhaps most importantly - being precise about what you actually want.

Ada Lovelace wrote her algorithm by hand for a machine that didn’t exist yet. She was translating mathematical intent into mechanical instructions, bridging the gap between human thinking and machine execution.

We’re still doing exactly that. We’re just doing it at a much higher level of abstraction. And every generation that’s made that leap has built something better than what came before.